Transformers: Attention Is All You Need

Transformers have become a fundamental building block in many areas of artificial intelligence (AI), particularly in natural language processing (NLP) and other tasks involving sequential data. The Transformer architecture was originally proposed by Google researchers in their groundbreaking 2017 paper “Attention Is All You Need” (available at https://arxiv.org/abs/1706.03762).

Here's why Transformers are so important:

1. Capturing Long-Range Dependencies: Traditional recurrent neural networks (RNNs) process a sequence one step at a time, so information from early elements has to survive many steps before it can influence later ones. Transformers instead let every element interact directly with every other element, which makes them good at capturing relationships between elements that are far apart in a sequence. This is crucial for tasks like machine translation, where understanding the context of a sentence requires considering words from both the beginning and the end.

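2. Parallel Processing: Transformers can process all positions of a sequence simultaneously instead of one after another, which makes them significantly faster to train than RNNs on parallel hardware such as GPUs, especially for long sequences. This parallelism is what makes training large language models practical. Text generation still produces output tokens one at a time, but the computation over the tokens already seen is parallel.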

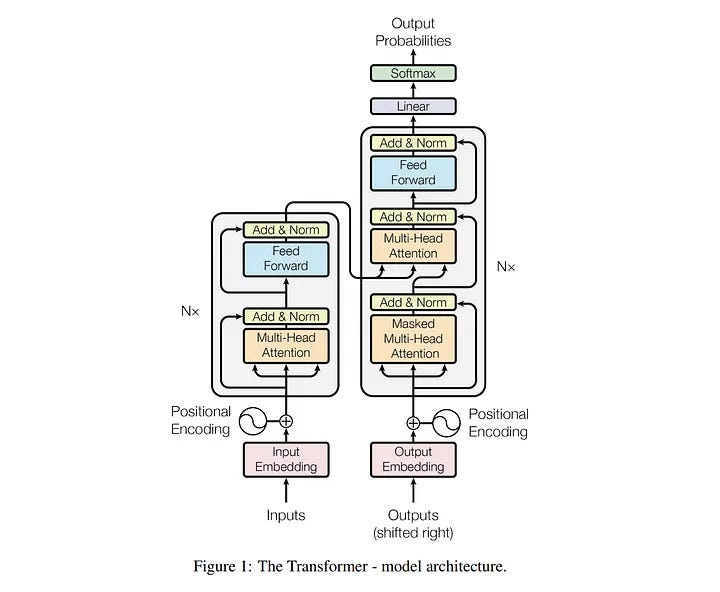

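3. Improved Attention Mechanism: Attention was already used alongside recurrent models before the Transformer, but the Transformer is built entirely on it, dropping recurrence and convolutions. With self-attention, each position in the sequence assigns a weight to every other position according to how relevant it is, and then combines their information using those weights. This allows the model to prioritize important information and make more accurate predictions.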

4. Wide Range of Applications: The success of transformers has led to their application in various AI tasks beyond NLP. These include:

* Computer vision: Analyzing and understanding the relationships between different regions or objects in an image, for example by treating image patches as a sequence.

* Speech recognition: Recognizing and interpreting spoken language, considering the context of the entire utterance.

* Time series forecasting: Predicting future values in a sequence of data points by understanding long-term dependencies.

* Protein structure prediction: Analyzing the relationships between amino acids in a protein sequence to predict its 3D structure.
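The attention mechanism described in points 1 to 3 can be illustrated with a minimal sketch of scaled dot-product self-attention, the core operation from the paper. This is an illustration under simplifying assumptions, not a full Transformer: it uses plain Python lists instead of an array library, made-up toy vectors, and identity projections (queries, keys, and values are all the raw token vectors, whereas a real model learns separate projection matrices). The names `softmax`, `self_attention`, and `tokens` are chosen here for the example.

```python
import math


def softmax(scores):
    """Turn a list of raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors.

    Returns (outputs, weights), where weights[i][j] is how strongly
    position i attends to position j.
    """
    d_k = len(keys[0])
    outputs, weights = [], []
    # Each query is handled independently of the others, which is why
    # real implementations compute all positions at once as a matrix product.
    for q in queries:
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        w = softmax(scores)
        # Output is the attention-weighted sum of the value vectors.
        out = [
            sum(w_j * v[c] for w_j, v in zip(w, values))
            for c in range(len(values[0]))
        ]
        outputs.append(out)
        weights.append(w)
    return outputs, weights


# Toy sequence of five 2-dimensional token vectors (made-up numbers).
# The first and last tokens are identical; the middle three differ.
tokens = [[1.0, 0.0], [0.0, 1.0], [0.0, 1.0], [0.0, 1.0], [1.0, 0.0]]

# Assumption: identity projections, so queries = keys = values = tokens.
outputs, weights = self_attention(tokens, tokens, tokens)

for i, row in enumerate(weights):
    print(i, [round(w, 3) for w in row])
```

In this toy run the first token gives the last token exactly the same weight it gives itself, and more weight than it gives its immediate neighbor, even though the last token is four positions away. Distance in the sequence plays no role in the score, which is the sense in which attention captures long-range dependencies. (Real Transformers add positional information to the inputs so that word order is not lost.)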

Overall Impact:

Transformers have revolutionized AI by enabling the development of more powerful and versatile models. Their ability to capture long-range dependencies, their efficient parallel processing, and their attention mechanism have led to significant advances in natural language processing and other areas that rely on sequential data. As research continues, transformers are expected to play an even greater role in shaping the future of AI.